Web 3.0 is coming soon…

Linking

IMHO the Web 3.0 revolution will consist of websites and web apps from the 2.0 era growing closer together.

I think that it will become easier to link together content across web sites to create new forms of content.

The Web 2.0 revolution was helped by blogs, with authors linking together information in their posts. (This, I might add, has been very useful in combating the slew of dodgy sites that sit high in Google’s results but just spit back the search terms as results, nullifying your search. Nowadays I find I use ‘blog’ in search terms, especially when looking for reviews.)

I can’t wait until someone puts together a really good way of visualizing all this data. As the internet grows the importance of being able to sift through the available data and collate it into collections on particular topics is becoming paramount.

I have been looking out for a system to visualize my internet links in some kind of subject-oriented way with a timeline / time axis. So far the only thing that comes close is Basket Notes for KDE (screenshots). If only that were a web app! (If I had the motivation and focus, I’d turn my meagre PHP programming skills to that task myself, but alas, like my design for a social networking site sketched in my design book before the advent of Facebook, I think I’ll leave it to someone else!)

I guess the closest similar web-based system (that I’m aware of) currently in operation is Wikipedia!

Retrieval

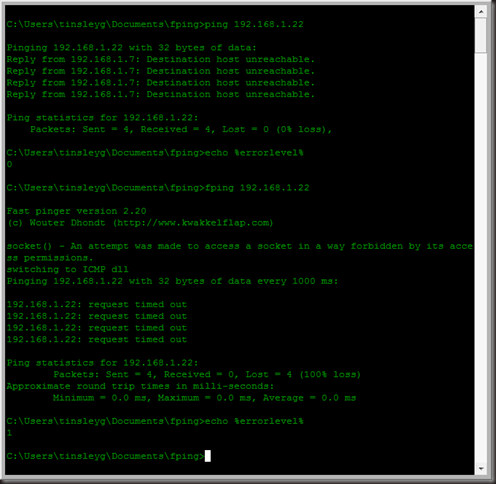

Look at the useful plugin Ubiquity, and the fantastically useful cross-platform application and search launcher Launchy, for example. Both of these are designed to give us quicker access to our data and better ways to search it.

Workflow

Making computers integrate seamlessly into our lives rather than interrupting them.

Today the focus of computing is shifting from _ to the workflow – how we get things done. I think this is essential because your average end user doesn’t care how things get done, just as long as they can get done.

Digital photographers often use a prescribed workflow when working on digital photos – ‘developing them’ as it were, to bring out the best. PCPro Magazine suggests 1. Levels and Curves, then 2. Colour adjustment, followed by Sharpening. But I’m talking about more than just the best sequence of events to achieve the best quality output. I’m talking about the process itself.

Our brains think sequentially: each action is broken down step by step, and the steps are performed one after another. A break in our concentration, or ‘flow’, impacts our effectiveness. This is especially true for people with ADHD (like me). The goal is to reduce the need for context switching.

“Consider that it takes 15 minutes for a developer to enter a state of flow. If you were to interrupt a developer to ask a question and it takes five minutes for them to answer, it will take a further 15 minutes for them to regain that state of flow, resulting in a 20 minute loss of productivity. Clearly, if a developer is prevented from flowing several times during the day their work rate declines substantially.”

(Retrieved from http://softwarenation.blogspot.com/2009/01/importance-of.html)
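To put rough numbers on that quote, here is a back-of-the-envelope sketch in Python. Only the 15 minute and 5 minute figures come from the quote; the function names and the example of four interruptions in a day are assumptions made up for illustration, and the sum assumes each interruption arrives after flow has been fully regained.

```python
# Figure taken from the quote above: time needed to get (back) into flow.
FLOW_ENTRY_MINUTES = 15


def minutes_lost(interruption_minutes, flow_entry_minutes=FLOW_ENTRY_MINUTES):
    """Productive minutes lost to a single interruption.

    The cost is the interruption itself plus the time needed to
    regain flow afterwards.
    """
    return interruption_minutes + flow_entry_minutes


def daily_loss(interruptions):
    """Total minutes lost over a day, given each interruption's length.

    Assumption (not from the quote): interruptions are spaced far enough
    apart that flow was fully regained before the next one arrives.
    """
    return sum(minutes_lost(minutes) for minutes in interruptions)


if __name__ == "__main__":
    # The quote's own example: one five-minute question.
    print(minutes_lost(5))
    # Hypothetical day with four five-minute questions.
    print(daily_loss([5, 5, 5, 5]))
```

Under those assumptions the cost scales with the number of interruptions rather than their length, which is why a handful of "quick questions" can eat such a large part of a day.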

Take, for example, downloading pictures from your digital camera and uploading them to Facebook. Recently I’ve been using ‘Windows Live Photo Gallery’. Ugh, I know, but the point is that Vista offered it to me, and there was an easy to find and add plugin that allows me to upload direct to Facebook, where most of my photos end up these days.

To download the pictures I simply flip out the SD card from my camera and insert it into my laptop’s SD card slot (useful laptop buying advice).

And that’s the point: people will take the path of least resistance/effort.

Path of Least Effort Principle

From watching people walk down the high street, striving to avoid collision with other pedestrians, I have come to believe that everybody operates on the principle of least effort: the person you are approaching will attempt to take the path that needs the least diversion from their original path in order to avoid a collision, while you yourself will attempt to do the same thing.

How does this come back to Web 3.0?

How many clicks does it take while searching for some long forgotten but relevant piece of information before a user will get bored and move on? [research advertising, google hotspots, number of clicks] Could it be as low as 3, and as high as 8?

Unified User Interface

Take Facebook, for example. I was trying to find my note on laptops to include a link in this article, but alas my click on Notes from the home page only brought up a ‘feed’ of Notes. Where, I ask, are the filter options that reside on everyone’s profiles? Why can’t I select ‘Just Garreth’ here too?

If something like that is useful, it should also be unified, that is, available everywhere!

In the time it took me to discover the ‘workflow’ to access my notes, in this ‘fast/bitesize/information obsessed’ age my poor overloaded ADHD (video: ADHD impact on life) brain might easily have become bored, frustrated and, more importantly, distracted, and moved on…

Availability

Cloud computing and Rich Web Applications (Blog: Google and Rich Web Application)

Organisation of Data

TOC

Concise

It’s an inverse law – as our attention spans decrease, so the conciseness of the data we consume must increase, ceteris paribus.

Why do my spidey senses tell me Facebook, not Google, may be the winner in the Web 3.0 revolution?

- Reduce the need for context switching

- Make data transfer between devices, programs and operating systems simpler and more unified

- Make data easier to locate and retrieve

- Make locating an open program/context switching easier and more natural – in doing so reducing the impact on flow by automatically knowing how to get back to the other program/where it is.

- Design and create more natural interfaces – e.g. Apple’s iPhone and iPod touch.

- Consider how context switching works in our heads and apply this to UI.

- Work on unified user interfaces so as not to interrupt flow

What do you think? Leave some comments with your vision, and what you think of my ideas.